Python is such a fun and powerful language. Sure, it can be messy, but it makes up for it with speed and power. Below is a handy example of the asynchronous calls you can make with asyncio.
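Before the full script, here is a minimal sketch of the core idea. Three simulated calls run concurrently with `asyncio.gather`, so the total wait is about one delay instead of three. The `fake_call` coroutine is a hypothetical stand-in for network I/O and is not part of the original script.

```python
import asyncio
import time


async def fake_call(name, delay):
    # Hypothetical stand-in for a slow network call.
    # asyncio.sleep yields to the event loop, so other calls can run meanwhile.
    await asyncio.sleep(delay)
    return name


async def main():
    start = time.monotonic()
    # gather() runs all three coroutines concurrently on one event loop
    # and returns their results in the order they were passed in.
    results = await asyncio.gather(
        fake_call("a", 0.3), fake_call("b", 0.3), fake_call("c", 0.3)
    )
    elapsed = time.monotonic() - start
    return results, elapsed


if __name__ == "__main__":
    results, elapsed = asyncio.run(main())
    # Roughly 0.3 s in total rather than 0.9 s, because the waits overlap.
    print(results, f"{elapsed:.2f}s")
```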

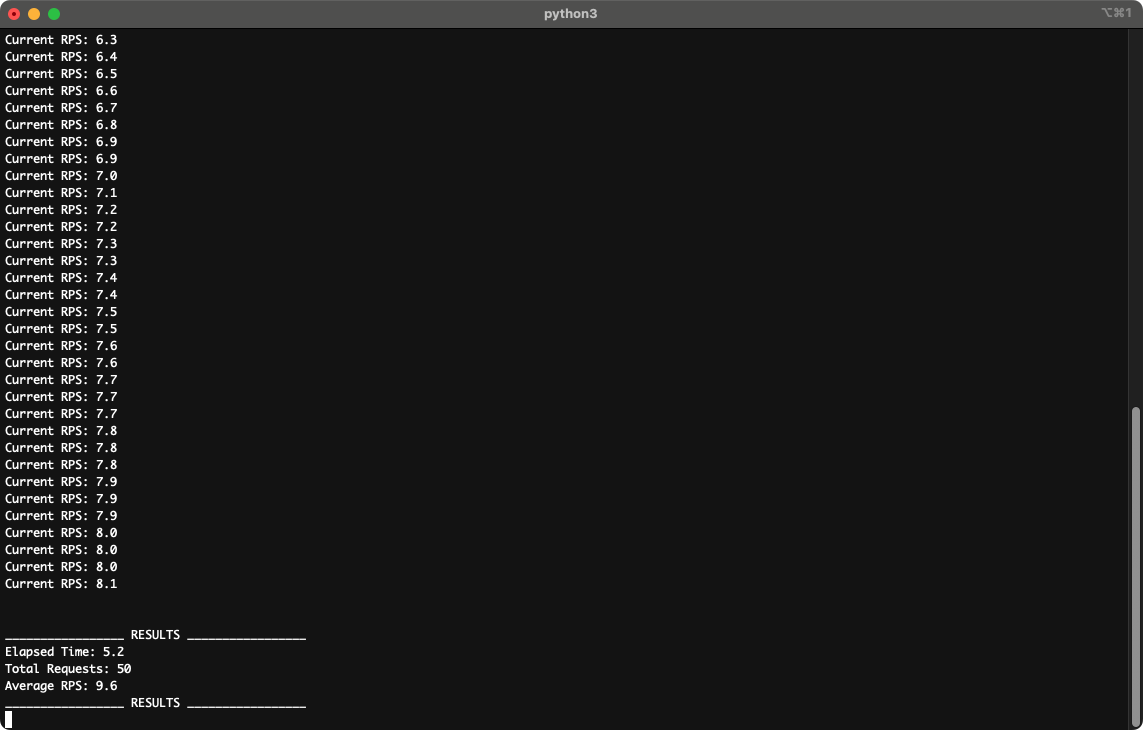

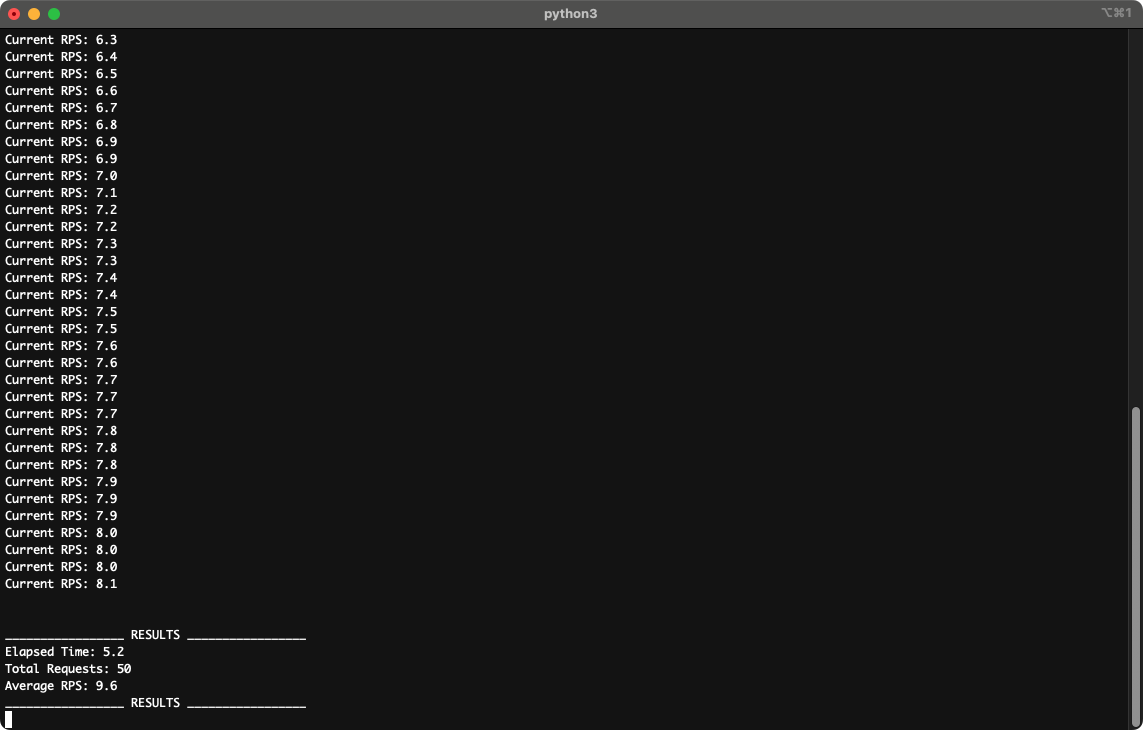

"Asyncio, oh asyncio, a twisty thread, where coroutines dance and futures are bred. We "await" with bated breath, while the event loop spins, juggling tasks like digital, caffeinated twins. But beware the blocking call, a villain in disguise, it'll freeze your loop solid, and bring tears to your eyes. Debugging's a riddle, a mystical, strange quest, where "pending" futures mock you, and put your skills to the test. Yet, when it all clicks, and the code flows so free, you'll feel like a maestro, conducting a symphony."

Well that was fun. Anyway, here's a simple script, `loader.py`, that leverages asyncio to call Google:

```python
#!/usr/bin/env python3
# Requires Python 3.9+ for asyncio.to_thread.

import argparse
import asyncio
import time

import requests

default_rps = 1
default_duration = 5


async def do():
    # Call Google's homepage, disable HTTPS cert verification, because who cares?
    # requests.get blocks, so run it in a worker thread to keep the event loop free.
    # The default thread pool caps concurrency at min(32, os.cpu_count() + 4) workers.
    await asyncio.to_thread(requests.get, "https://google.com", verify=False, timeout=10)


async def do_loop(rps, duration):
    start_time = time.perf_counter()
    total_requests = 0
    tasks = []
    times_to_run = round(rps * duration)

    for _ in range(times_to_run):
        # asyncio.sleep yields to the event loop; time.sleep would freeze it,
        # so no request could start until the whole loop had finished.
        await asyncio.sleep(1 / rps)
        tasks.append(asyncio.create_task(do()))
        total_requests += 1
        view_rps(start_time, total_requests)

    elapsed_time = time.perf_counter() - start_time
    average_rps = total_requests / elapsed_time

    # Let requests still in flight finish; one failure doesn't abort the rest.
    await asyncio.gather(*tasks, return_exceptions=True)

    print("\n")
    print("_________________ RESULTS _________________")
    print("Elapsed Time:", round(elapsed_time, 1))
    print("Total Requests:", total_requests)
    print("Average RPS:", round(average_rps, 1))
    print("_________________ RESULTS _________________")


def view_rps(start_time, total_requests):
    # Guard against a zero interval on coarse clocks.
    seconds_ran = max(time.perf_counter() - start_time, 1e-9)
    current_rps = total_requests / seconds_ran
    print("Current RPS:", round(current_rps, 1))


if __name__ == "__main__":
    # Parse arguments passed into this command, falling back to the defaults.
    parser = argparse.ArgumentParser()
    parser.add_argument("-r", help="Requests per second.", type=int, default=default_rps)
    parser.add_argument("-d", help="Duration in seconds.", type=int, default=default_duration)
    args = parser.parse_args()

    print("Starting Process...")
    print("Estimated RPS: %s" % args.r)
    print("Estimated Duration: %ss" % args.d)

    asyncio.run(do_loop(args.r, args.d))
```
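If you want to experiment with the pacing logic without sending real traffic, here is a minimal sketch that swaps the HTTP call for a hypothetical `fake_request` coroutine with a fixed simulated latency. It shows a paced-dispatch pattern: pause with a non-blocking `asyncio.sleep`, start each request as a task, and await them all at the end. The names `fake_request` and `paced_dispatch` are illustrative, not part of the original script.

```python
import asyncio
import time


async def fake_request(latency):
    # Hypothetical stand-in for an HTTP call: waits without blocking the loop.
    await asyncio.sleep(latency)


async def paced_dispatch(rps, duration, latency):
    """Start one fake request every 1/rps seconds for `duration` seconds.

    Returns (requests_sent, elapsed_seconds) once every request has finished.
    """
    start = time.monotonic()
    tasks = []
    for _ in range(round(rps * duration)):
        # Non-blocking pause: requests already started keep running meanwhile.
        await asyncio.sleep(1 / rps)
        tasks.append(asyncio.create_task(fake_request(latency)))
    await asyncio.gather(*tasks)
    return len(tasks), time.monotonic() - start


if __name__ == "__main__":
    sent, elapsed = asyncio.run(paced_dispatch(rps=20, duration=1, latency=0.5))
    # Run one after another, 20 requests at 0.5 s each would need over 10 s;
    # overlapping requests finish in roughly duration + latency (about 1.5 s).
    print(f"sent={sent} elapsed={elapsed:.2f}s")
```

Replacing the `await asyncio.sleep(...)` with `time.sleep(...)` here would stall the event loop during every pause, which is exactly the "blocking call" the poem warns about.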

Run a load test with 10 requests per second for 5 seconds, generating 50 requests:

```
python3 loader.py -r 10 -d 5
```

And we can see from the above that we averaged 9.6 RPS over 5.2 seconds. Amazing! Now, point the script at your service and try turning up the numbers.
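To point the script at your own service, one option is to make the URL an argument instead of hard-coding Google. Below is a minimal sketch under a few assumptions: the `-u` flag is hypothetical (the original script has no such option), and it uses the standard library's `urllib.request` in place of `requests` so the example has no third-party dependency. Note that `urllib.request` verifies HTTPS certificates by default.

```python
import argparse
import asyncio
import urllib.request

DEFAULT_URL = "https://google.com"


def build_parser():
    parser = argparse.ArgumentParser()
    parser.add_argument("-r", help="Requests per second.", type=int, default=1)
    parser.add_argument("-d", help="Duration in seconds.", type=int, default=5)
    # Hypothetical flag: the target URL to load test.
    parser.add_argument("-u", help="Target URL.", default=DEFAULT_URL)
    return parser


def fetch(url):
    # Blocking HTTP GET that returns the status code.
    # urlopen raises urllib.error.HTTPError for 4xx/5xx responses.
    with urllib.request.urlopen(url, timeout=10) as response:
        return response.status


async def do(url):
    # Run the blocking fetch in a worker thread so the event loop stays responsive.
    return await asyncio.to_thread(fetch, url)
```

With that in place, the request loop would pass `args.u` into `do()`, and an invocation might look like `python3 loader.py -r 10 -d 5 -u http://localhost:8080/` (the URL is a placeholder).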